Digital twins

Decentralized patient recruitment

Real-World Data

Passive Monitoring

Automated patient engagement

We've treated thousands of patients, built cutting-edge digital health platforms, and done billions of dollars in financial transactions in healthcare

We bet big on game-changing teams and technologies in healthcare

Invest across digital health platforms

Early to mid-stage companies

Value-add with healthcare industry expertise

B2B, B2C, B2B2C, B2C2B models

Founder-centric partnership model

Significant follow-on capital

Create a funding plan that allows pursuit of aggressive growth plans

Facilitate introductions to a vast network of healthcare stakeholders

Share key lessons from past digital health product adoptions

Work with management team to optimize business model

Leadership

We have experienced the challenges of healthcare technology adoption. This makes us the ideal investors for innovative digital health entrepreneurs.

AI in Healthcare Blog

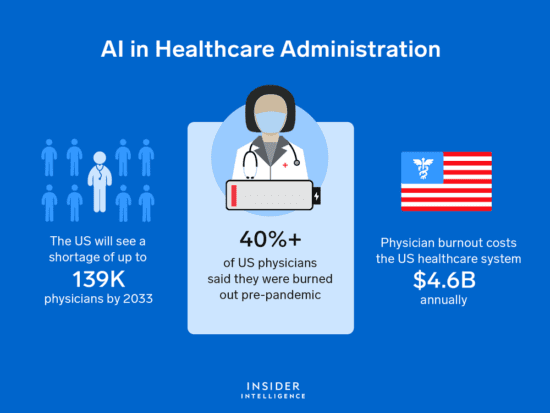

It’s estimated that about 25% of this $4 trillion is due to administration, which means that we spend...

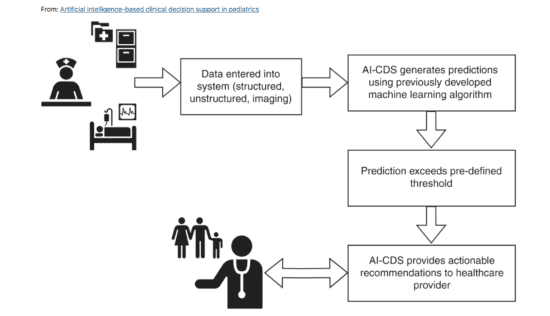

In a conversation with Kang Zhang, MD, one of the pioneers in the applications of healthcare AI, he...

As with clinical trials, regulatory submissions, pharmacovigilance, communicating with providers and patients, and monitoring social media for adverse...

Media

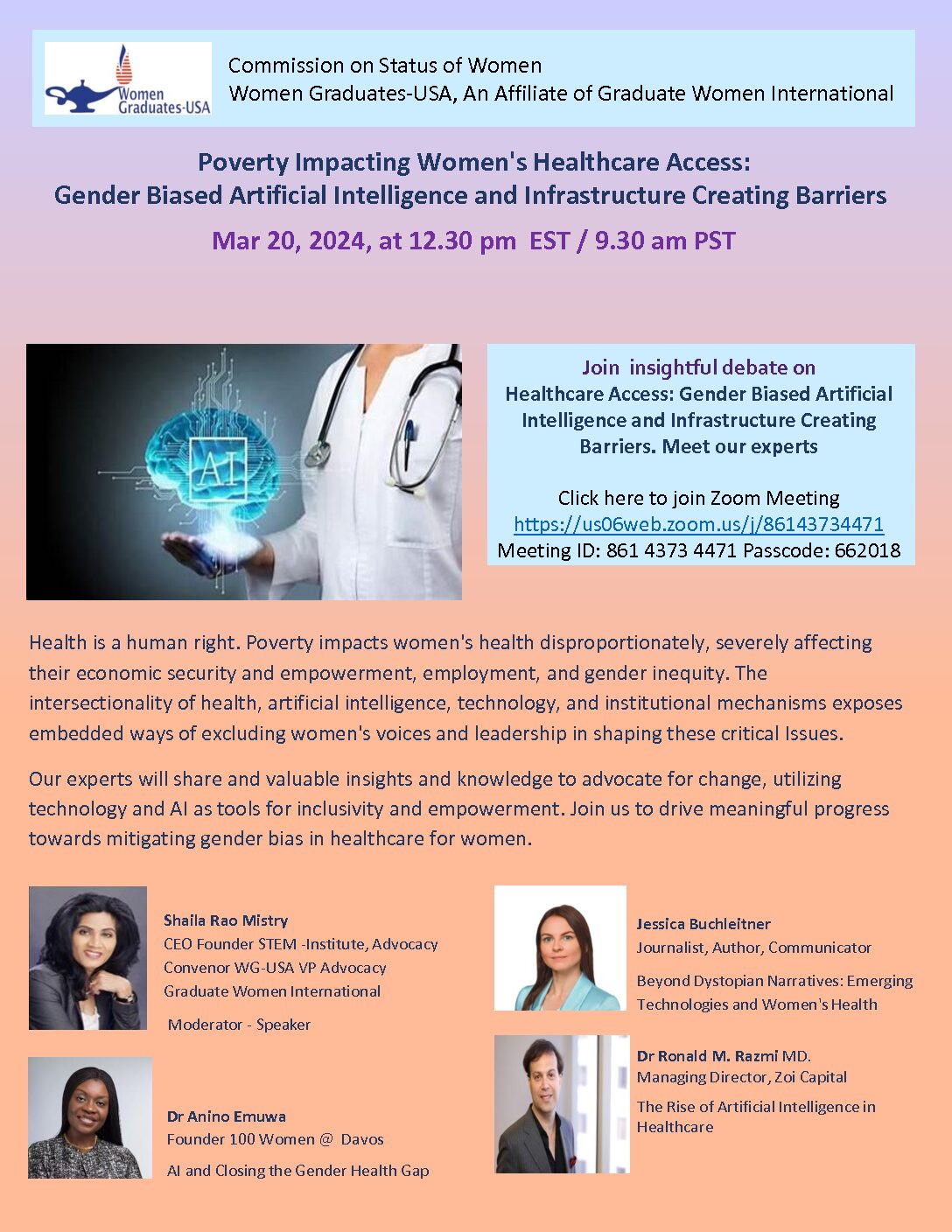

Poverty Impacting Women’s Healthcare Access: Gender Biased Artificial Intelligence and Infrastructure Creating Barriers

Mar 20, 2024, at 12.30...

Health AI: Hype vs Reality

A discussion of fact vs fiction in the applications of Artificial Intelligence in healthcare at the annual Wall...

Emerging Innovations in Healthcare #2: Mental Health (HYBRID)

Emerging Innovations in Healthcare (EIH) is a hybrid conference series by Mount Sinai Innovation Partners (MSIP), the commercialization arm of the Mount...